Floating Point Numbers

Today, I learnt about floats. I learnt about what they are, how they work, and why you’d use them.

#Python factlet: Signed zeros are weird.

```python
>>> x = -0.0
>>> x + x
-0.0
>>> x - (-x)
-0.0
>>> math.sqrt(x)
-0.0
>>> pow(x, 0.5)
0.0
```

— Raymond Hettinger (@raymondh) August 11, 2023

Interestingly, all of this behaviour is required by the IEEE 754 floating-point standard.
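Why would a zero have a sign at all? This small standard-library sketch shows three things. First, `-0.0` compares equal to `0.0`. Second, `math.copysign` can still recover the hidden sign. Third, `struct` shows that the two zeros differ only in the top (sign) bit of their 64-bit encoding.

```python
import math
import struct

neg_zero = -0.0
pos_zero = 0.0

# The two zeros compare equal...
print(neg_zero == pos_zero)            # True

# ...but the sign is still stored, and copysign can read it back.
print(math.copysign(1.0, neg_zero))    # -1.0
print(math.copysign(1.0, pos_zero))    # 1.0

# Their 64-bit encodings differ only in the sign bit (the top bit).
print(struct.pack(">d", neg_zero).hex())  # 8000000000000000
print(struct.pack(">d", pos_zero).hex())  # 0000000000000000
```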

What is a floating-point number?

Most programmers are familiar with floats: they are a type of number. Someone who doesn’t know much about floats might say something like:

I use ints when I need a whole number, and a float when I need a decimal!

This is mostly true: floats are the usual way to represent numbers with a fractional part.

Today, we will go a layer deeper.

What actually is a float?

Motivation for Floats

Say I have a 32-bit integer. That means I have 32 bits, each a 1 or a 0.

The largest number I can represent here (as an unsigned integer) is $$ 2^{32} - 1 = 4{,}294{,}967{,}295 $$ Say I go even bigger and want a huge number, with 64 bits: $$ 2^{64} - 1 = 18{,}446{,}744{,}073{,}709{,}551{,}615 \approx 1.8\times 10^{19} $$ This is getting large: we are up to about 18 quintillion. Large as it may seem, we might need even larger numbers, and our space use is starting to become a problem.
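The largest value that fits in n unsigned bits has every bit set to 1, which is \(2^n - 1\). Python's arbitrary-precision integers can check this directly. Python ints never overflow, so this sketch only computes the numbers; it does not model a fixed-width integer type.

```python
# Largest values that fit in n unsigned bits: all n bits set to 1.
max_u32 = 2**32 - 1
max_u64 = 2**64 - 1

print(f"{max_u32:,}")    # 4,294,967,295
print(f"{max_u64:,}")    # 18,446,744,073,709,551,615
print(f"{max_u64:.1e}")  # 1.8e+19

# Same answer, built explicitly from a bit pattern of 32 ones.
print(max_u32 == int("1" * 32, 2))  # True
```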

What if we could represent even bigger numbers using fewer bits?

This is where floats come in.

The largest number a 32-bit float can represent is about \(3.4\times 10^{38}\). That’s roughly twice as many orders of magnitude as the largest 64-bit integer, with half the bits!
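The exact value of the largest single-precision float comes from its bit pattern. The exponent field is 254 (all ones is reserved for special values), and every fraction bit is set, so the value is \((2 - 2^{-23}) \times 2^{127}\). This sketch computes that value, then cross-checks it by decoding the pattern `7F7FFFFF` with `struct`.

```python
import struct

# Largest finite single-precision value: exponent field 254, all 23 fraction bits set.
# (1 + (1 - 2**-23)) * 2**(254 - 127) == (2 - 2**-23) * 2**127
float32_max = (2 - 2**-23) * 2**127
print(float32_max)  # 3.4028234663852886e+38

# Cross-check: decode the bits 0 11111110 111...1 (hex 7F7FFFFF) as a big-endian float.
(decoded,) = struct.unpack(">f", bytes.fromhex("7f7fffff"))
print(decoded == float32_max)  # True
```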

Clearly, there is some benefit to the computer storing floats in this way.

So, how does this actually work?

Floating Details

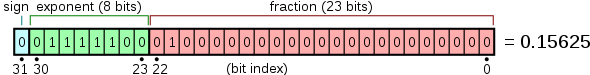

Floats are standardised by IEEE 754 and consist of three parts:

- sign

- exponent

- fraction (otherwise known as the “mantissa”)

Together, these three parts produce a floating-point number.

The diagram above, from Wikipedia, shows how they are laid out.

The sign bit says whether the number is positive or negative, the exponent sets its scale as a power of two, and the fraction supplies its significant digits.

The float in the diagram is a single-precision (32-bit) float, with field widths s=1, exp=8 and frac=23 bits.

A double-precision (64-bit) float has s=1, exp=11 and frac=52.
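To see the three fields for a real number, one option is to let Python's `struct` module produce the IEEE 754 bytes. The fields can then be pulled out with shifts and masks. The helper names `float32_fields` and `float64_fields` are hypothetical, made up for this sketch. Python floats are doubles, so packing with the `"f"` format first rounds the value to single precision.

```python
import struct


def float32_fields(x: float) -> tuple[int, int, int]:
    """Return the (sign, exponent, fraction) bit fields of x rounded to single precision."""
    # Pack x as a big-endian IEEE 754 single, then reread those 4 bytes as an unsigned int.
    (bits,) = struct.unpack(">I", struct.pack(">f", x))
    sign = bits >> 31               # 1 bit
    exponent = (bits >> 23) & 0xFF  # 8 bits, still biased
    fraction = bits & 0x7FFFFF      # 23 bits
    return sign, exponent, fraction


def float64_fields(x: float) -> tuple[int, int, int]:
    """Same idea for a double: 1 sign bit, 11 exponent bits, 52 fraction bits."""
    (bits,) = struct.unpack(">Q", struct.pack(">d", x))
    return bits >> 63, (bits >> 52) & 0x7FF, bits & ((1 << 52) - 1)


print(float32_fields(1.0))   # (0, 127, 0)
print(float32_fields(-2.0))  # (1, 128, 0)
print(float64_fields(1.0))   # (0, 1023, 0)
```

Note that the stored exponent field of 1.0 is 127 rather than 0. The exponent is kept with a bias, which is why a 127 gets subtracted when converting back to a number.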

Float Calculations

Floats aren’t just bits: they encode ordinary numbers that we usually write in base 10.

To convert the bits of a normalised single-precision float into a number, we can use the following formula: $$ (-1)^{\text{sign}} \times (1 + \text{fraction}) \times 2^{\text{exponent} - 127} $$ Where:

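- Sign is the sign bit (0 for positive, 1 for negative).

- Fraction is the 23 mantissa bits read as a binary fraction \(0.b_1 b_2 \ldots b_{23}\), that is \(\sum_i b_i 2^{-i}\).

- Exponent is the exponent bits read as an unsigned integer; subtracting the bias (127 for single precision) gives the actual power of two.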
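As a worked example, take the bit string used in the Python code in this section, `0 10000001 01000111101011100001010`. The sign bit is 0. The exponent bits `10000001` are 129. The fraction bits have 1s in positions 2, 6, 7, 8, 9, 11, 13, 14, 15, 20 and 22:

$$ \text{fraction} = 2^{-2} + 2^{-6} + 2^{-7} + 2^{-8} + 2^{-9} + 2^{-11} + 2^{-13} + 2^{-14} + 2^{-15} + 2^{-20} + 2^{-22} \approx 0.2799999714 $$

$$ (-1)^{0} \times (1 + 0.2799999714\ldots) \times 2^{129 - 127} \approx 5.1199998856 $$

That is the single-precision value closest to 5.12. It misses slightly because 0.28 has no exact 23-bit binary fraction.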

This Python code performs the conversion for a 32-bit bit string.

```python
def bits_to_float(bit_string):
    # Make sure the string is 32 bits long
    assert len(bit_string) == 32

    # Extract sign, exponent, and fraction bits
    sign_bit = int(bit_string[0], 2)
    exponent_bits = int(bit_string[1:9], 2)
    fraction_bits = bit_string[9:]

    # Compute the sign, -1 if sign_bit is 1, otherwise 1
    sign = -1 if sign_bit == 1 else 1

    # Compute the exponent by subtracting the bias of 127.
    # Note: this only handles normalised numbers. Exponent bits of all 0s
    # (zero, denormalised values) or all 1s (infinity, NaN) are not special-cased.
    exponent = exponent_bits - 127

    # Compute the fraction
    fraction = 1.0  # start with the implicit leading bit
    for i, bit in enumerate(fraction_bits):
        if bit == '1':
            fraction += 2 ** (-i - 1)

    # Compute the float value
    float_val = sign * fraction * (2 ** exponent)
    return float_val


# Example usage:
bit_representation = "01000000101000111101011100001010"
print(bits_to_float(bit_representation))  # 5.119999885559082
```
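The standard library can do the same decoding, which makes a handy cross-check for a hand-written converter. This sketch turns the bit string into 4 big-endian bytes, then lets `struct` interpret them as a single-precision float. The function name `bits_to_float_struct` is hypothetical. Because `struct` follows the IEEE 754 encoding, it also decodes zero, denormalised values, infinity and NaN correctly.

```python
import struct


def bits_to_float_struct(bit_string: str) -> float:
    """Decode a 32-character bit string as an IEEE 754 single-precision float."""
    if len(bit_string) != 32:
        raise ValueError("expected exactly 32 bits")
    # Parse the bits as an unsigned integer, then lay it out as 4 big-endian bytes.
    raw = int(bit_string, 2).to_bytes(4, "big")
    # ">f" reads those bytes as a big-endian 32-bit float.
    (value,) = struct.unpack(">f", raw)
    return value


print(bits_to_float_struct("01000000101000111101011100001010"))  # 5.119999885559082
print(bits_to_float_struct("11000000000000000000000000000000"))  # -2.0
```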

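The exponent gives floats their enormous range, while the mantissa determines their precision. The 23 stored fraction bits plus the implicit leading 1 give 24 significant bits, or roughly 7 decimal digits.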

Why the -127?

The sign bit already gives us negative numbers, so what benefit is there in subtracting a bias of 127 from the exponent?

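According to CS:APP[^2], the exponent bits are read as an unsigned number (0 to 255 for single precision). Subtracting the bias of 127 lets that field represent negative powers of two as well as positive ones. Without the bias, we could only scale the fraction up, never down towards zero.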

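Two exponent patterns are reserved. When the exponent is all zeros, the number is in ‘denormalised’ form. This is how 0 is stored, with the exponent and fraction both all zeros. When the exponent is all ones, the value is special: infinity if the fraction is all zeros, and NaN otherwise.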

Normalised numbers (any other exponent) always have an implicit leading 1 in the mantissa, so they cannot represent the number 0. Denormalised numbers drop the implicit 1, which is what makes 0 representable.

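Denormalised numbers also give finer granularity close to zero. Without them, the smallest normalised value, \(2^{-126}\) in single precision, would jump straight to 0. Instead, they fill that gap with evenly spaced values down to \(2^{-149}\). The cost is that reserving two exponent patterns slightly reduces the range of normalised numbers.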
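Here is a sketch of a single-precision decoder that handles every exponent pattern, following the IEEE 754 rules:

- An all-zero exponent means a denormalised number, with no implicit leading 1 and a fixed exponent of \(1 - 127 = -126\). This includes ±0 when the fraction is also zero.
- An all-ones exponent means infinity (fraction zero) or NaN (fraction non-zero).
- Anything else is normalised.

The function name `decode_float32` is hypothetical, and it assumes its input is a 32-bit unsigned integer.

```python
import math


def decode_float32(bits: int) -> float:
    """Decode a 32-bit IEEE 754 single-precision pattern, given as an unsigned int."""
    sign = -1.0 if bits >> 31 else 1.0
    exponent_bits = (bits >> 23) & 0xFF
    fraction_bits = bits & 0x7FFFFF
    fraction = fraction_bits / 2**23  # the 23 fraction bits as 0.b1b2...b23

    if exponent_bits == 0xFF:
        # All ones: infinity if the fraction is zero, otherwise NaN.
        return sign * math.inf if fraction_bits == 0 else math.nan
    if exponent_bits == 0:
        # All zeros: denormalised. No implicit leading 1, exponent fixed at 1 - 127.
        # A zero fraction gives 0.0 or -0.0 depending on the sign bit.
        return sign * fraction * 2.0 ** (1 - 127)
    # Any other exponent is normalised: implicit leading 1, exponent minus the bias.
    return sign * (1 + fraction) * 2.0 ** (exponent_bits - 127)


print(decode_float32(0x00000000))             # 0.0
print(decode_float32(0x80000000))             # -0.0
print(decode_float32(0x00000001) == 2**-149)  # True: the smallest denormalised value
print(decode_float32(0x7F800000))             # inf
print(decode_float32(0x7FC00000))             # nan
print(decode_float32(0x3F800000))             # 1.0
```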

Why Not Floats

The below image is from CS:APP[^2].

Floats get less precise the further they are from 0: the gap between neighbouring representable values grows with the size of the number.
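Python's floats are double precision, and `math.ulp` (available since Python 3.9) returns the gap between a positive float and the next larger one. This sketch shows that gap growing with magnitude. Past \(2^{53}\), neighbouring doubles are more than 1 apart, so not every integer can be stored.

```python
import math

# Gap from x to the next larger double, at increasing magnitudes.
print(math.ulp(1.0))               # 2.220446049250313e-16, i.e. 2**-52
print(math.ulp(1000.0) == 2**-43)  # True
print(math.ulp(1e16))              # 2.0: neighbouring doubles here are 2 apart

# With 53 significant bits, 2**53 + 1 rounds back down to 2**53.
big = 2.0**53
print(big + 1 == big)  # True
```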

In fact, many numbers simply cannot be represented exactly as a float.

Consider the following.

```python
f = 0.1 + 0.2
print(f)
```

What does this print? You might guess 0.3, and you would be wrong.

```python
print(f)  # 0.30000000000000004
```

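Numbers like 0.1, and many others, cannot be represented exactly by a float. In binary, 0.1 is a repeating fraction (just as 1/3 is in base 10), so it is rounded to the nearest value that fits in the available bits.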
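To see what 0.1 actually becomes, `decimal.Decimal` and `fractions.Fraction` can both show the exact value of the double that the literal `0.1` rounds to. `float.hex` shows its bits. Because of that rounding, comparing floats with `==` is fragile, and `math.isclose` is one standard-library way to test for approximate equality instead.

```python
import math
from decimal import Decimal
from fractions import Fraction

# Decimal(float) converts the stored binary value exactly, with no rounding.
print(Decimal(0.1))
# 0.1000000000000000055511151231257827021181583404541015625

# As a fraction, the denominator is a power of two (2**55), not a power of ten.
print(Fraction(0.1))  # 3602879701896397/36028797018963968

# The repeating hex digits mirror the repeating binary expansion of 1/10.
print((0.1).hex())  # 0x1.999999999999ap-4

print(0.1 + 0.2 == 0.3)              # False
print(math.isclose(0.1 + 0.2, 0.3))  # True
```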

Floats give a much larger range for the same number of bits, but they sacrifice precision to do it.

If you need exact decimal arithmetic, consider using a Decimal type in your language, or another representation such as an object that stores two integers: the digits of the number and the location of the decimal point.
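In Python, the standard `decimal` module provides such a type. This sketch builds `Decimal` values from strings; building them from floats would copy the float's rounding error. `Decimal.as_tuple()` exposes a sign, a tuple of digits and a power-of-ten exponent, which is close to the "two integers" idea. `fractions.Fraction` is another exact option for rational numbers.

```python
from decimal import Decimal
from fractions import Fraction

# Construct from strings so the values are exactly 0.1, 0.2 and 0.3.
a = Decimal("0.1")
b = Decimal("0.2")
print(a + b)                    # 0.3
print(a + b == Decimal("0.3"))  # True

# A Decimal is stored as a sign, its digits, and a power-of-ten exponent.
print(Decimal("0.3").as_tuple())  # DecimalTuple(sign=0, digits=(3,), exponent=-1)

# Fractions keep an exact numerator and denominator instead.
print(Fraction(1, 10) + Fraction(2, 10) == Fraction(3, 10))  # True
```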

Conclusion

Floats are a great piece of technology. Knowing when to use them is key to getting the most out of them.